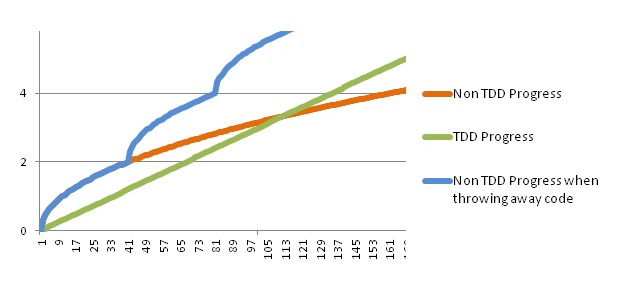

All over the web you find debates about whether early-stage startups should do Test-Driven Development (TDD). TDD is a development process where developers write a test before writing any production code. Once the test exists, they run it to prove that it fails while the functionality is still missing. Then they implement the functionality and continue until the test passes. TDD should result in higher-quality and more maintainable code. It takes more time in the beginning compared to just writing the code directly. But when complexity increases and there are no tests, things start to break and the velocity of the team drops. With TDD, the team keeps going at a more constant velocity for a much longer time. This is illustrated in the figure below with the green and orange curves.
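The test-first cycle described above can be sketched in a few lines of Python. This is only an illustration: `conversion_rate` is a hypothetical function invented for the example, and the tests are written as plain `assert` functions in the style that pytest collects. The comments mark the order in which the pieces would be written.

```python
# Step 1 (written first): the tests describe the behaviour we want.
# Run before conversion_rate exists, they fail with a NameError,
# which proves the tests are able to fail.
def test_rate_is_signups_divided_by_visitors():
    assert conversion_rate(25, 100) == 0.25


def test_no_visitors_gives_zero_instead_of_crashing():
    assert conversion_rate(0, 0) == 0.0


# Step 2 (written second): the simplest implementation that makes
# both tests pass. The name and signature are assumptions made for
# this example only.
def conversion_rate(signups: int, visitors: int) -> float:
    """Return the share of visitors who signed up (0.0 if nobody visited)."""
    if visitors == 0:
        return 0.0
    return signups / visitors
```

Saved in a file whose name starts with `test_`, these tests can be run with pytest. Once they pass, the code can be refactored and the tests re-run to confirm that nothing broke.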

There are plenty of people who feel that a startup should not do TDD, because it slows down development in the beginning. The only goal of a startup is to find product-market fit as quickly as possible. Probably 90% of the early code is going to be thrown away anyway. Progress is measured by validated hypotheses, not by features, lines of code or anything else. The usual argument is that things change too quickly and the MVP is so simple that the benefit of TDD will never show, since the code will be in the trash before the velocity starts to drop. The blue curve in the diagram below shows the theoretical progress made when all code is thrown away after each experiment. The team gets to start fresh each time with no technical debt, so the average velocity will be quite high. This can probably only be done for the first experiments, after which there will be a better understanding of the users/customers and code reuse will start to pay off.

Some TDD fanatics argue that TDD actually speeds up development no matter what; the developers just have to learn to do it efficiently. Additionally, maintainability of the code is considered important in a chaotic environment where things change quickly. A final argument is that once product-market fit is found, it will be hard to take things forward and scale up under enormous technical debt. This holds true for most software. The only exception would be apps where the market risk is so high that one needs to start validating hypotheses with some really simple tests that are thrown away immediately, as illustrated by the blue curve in the diagram above. Completely new concepts for new markets meet these criteria.

Another common argument is that the software can be a bit buggy as long as it is good enough for validating a hypothesis. I read an interesting comment on Hacker News from a user called DanielBMarkham, that “technical debt can never exceed the economic value of your code, which in a startup is extremely likely to be zero”. I take this to mean that the cost of a rewrite is very low in a startup. This should not be confused with quality. Low-quality, buggy software can cost far more than what was invested in writing it. Even if the software is just a simple test of a business hypothesis, a bug may result in wrong measurements, which will eventually result in wrong business decisions.

How much quality do you need in your MVP? Let’s first look at what quality means. Colin Kloes wrote a good blog post about the importance of a team’s shared understanding of quality. He said, “Software quality is characterised by how well the software has solved the user’s problem.” Now think about Steve Blank’s definition of a startup: “A startup is a temporary organization designed to search for a repeatable and scalable business model”. The only purpose of the software is to help the founders find a repeatable and scalable business model. The software itself is the test: it tests the business model that the founders have come up with. This means that quality is defined by how well the software helps the founders test their business model hypotheses.

To be able to test hypotheses, it is vital that all analytics are correct. A bug in the tracking of an important event under test will result in wrong measurements, which may lead the founders to make the wrong decisions. Also, if a bug makes the software unusable, the founders will never know whether users stayed away because of the bug or because they were not interested. Put this way, quality is suddenly more important than ever.
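As a rough sketch of what guarding a measurement could look like, the tests below check that a sign-up is recorded exactly once and that a rejected sign-up is not counted at all. Everything here is hypothetical: `InMemoryTracker` stands in for whatever analytics client a team actually uses, and `sign_up` is an invented handler with a deliberately naive email check.

```python
from dataclasses import dataclass, field


@dataclass
class InMemoryTracker:
    """Hypothetical stand-in for an analytics client; keeps events in a list."""
    events: list = field(default_factory=list)

    def track(self, name: str, **properties) -> None:
        self.events.append((name, properties))


def sign_up(email: str, tracker: InMemoryTracker) -> bool:
    """Hypothetical sign-up handler: records the event only on success."""
    if "@" not in email:  # naive validation, for illustration only
        return False      # rejected sign-ups must not inflate the metric
    tracker.track("signup", email=email)
    return True


def test_successful_signup_is_tracked_exactly_once():
    tracker = InMemoryTracker()
    assert sign_up("ada@example.com", tracker) is True
    assert tracker.events == [("signup", {"email": "ada@example.com"})]


def test_rejected_signup_is_not_tracked():
    tracker = InMemoryTracker()
    assert sign_up("not-an-email", tracker) is False
    assert tracker.events == []
```

If someone later moves the `track` call above the validation, or calls it twice, the tests fail before the skewed numbers reach a dashboard.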

MVPs usually do not have much complex code, mostly just getters and setters. On the web they may sometimes be mostly HTML/CSS with some data retrieved from the database. In these cases there will not be a need for many unit tests. However, it is still a very good idea to have the TDD attitude from the start. You do not know in which direction the MVP is going to evolve. You might want to have a couple of automated acceptance tests around the most important features to be sure they work as they are supposed to, ensuring your experiments are conducted correctly. By doing this you will also have the necessary infrastructure in place to scale up operations when product-market fit is found.

In many cases the first MVP can be just an almost static HTML page, an experiment to see how many users would sign up for a new concept. Some concepts can also be tested with something built on a CMS or even just static HTML pages updated manually by a user. In these cases it would not make any sense to do TDD. However, I think these are special cases, and at some point the founders are going to need their own code to validate hypotheses further.

The software a startup is building, no matter whether it is an MVP or not, will have a purpose. If the software fails to deliver what it is meant to do, it is useless. Thus, bad quality is not acceptable. My conclusion is that the software is an important tool for the founders to validate their hypotheses. If the tool breaks or the technical debt becomes so high that it is no longer possible to use it for validating hypotheses with quick experiments, the founders will not be able to do their job of finding a repeatable and scalable business model.